Solutions

Global AI delivers vertically integrated sovereign AI infrastructure — purpose-built for nations, enterprises, and frontier AI developers who require dedicated, secure, and scalable compute at hyperscale.

Our facilities are engineered from the ground up around the world's most advanced GPU systems — supported by on-site power generation, direct-to-chip liquid cooling, and air-gapped network isolation where required.

NVIDIA GB200 NVL72

Details:

The NVIDIA GB200 NVL72 is a rack-scale, liquid-cooled supercomputer combining 36 Grace CPUs and 72 Blackwell GPUs in a single unified NVLink domain. Each Blackwell GPU packs 208 billion transistors on TSMC's 4NP process, and the 72-GPU domain operates as a single massive GPU, delivering exascale AI compute in one rack.

Each GB200 Grace Blackwell Superchip connects two B200 Tensor Core GPUs to an NVIDIA Grace CPU via a 900 GB/s NVLink-C2C interconnect, giving the CPU and GPUs within each superchip a coherent view of memory.

Highlights

Key Details

- 72 Blackwell GPUs + 36 Grace CPUs — rack-scale unified compute

- 1.44 ExaFLOPS FP4 Tensor performance per rack

- 13.5 TB HBM3e memory per rack

- 576 TB/s total memory bandwidth

- 130 TB/s NVLink bisection bandwidth

- 208 billion transistors per Blackwell GPU, built on the TSMC 4NP process

- ~120 kW power draw, fully liquid-cooled

- 800 Gb/s networking via Quantum-X800 InfiniBand / Spectrum-X800 Ethernet

- Supports FP4, FP8, BF16, FP16, FP32, FP64 precision formats

- Includes BlueField-3 DPUs for zero-trust security & network acceleration
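
To put the rack-level figures above in per-GPU terms, the short sketch below divides each quoted total evenly across the 72 GPUs. It assumes the totals are simple sums over identical GPUs; the dictionary keys and the `per_gpu` helper are illustrative names, not part of any NVIDIA tool.

```python
# Rack-level figures quoted in the list above for the GB200 NVL72.
GPUS_PER_RACK = 72
rack_totals = {
    "hbm3e_tb": 13.5,       # TB of HBM3e per rack
    "mem_bw_tbps": 576.0,   # TB/s total memory bandwidth
    "nvlink_tbps": 130.0,   # TB/s NVLink bandwidth
    "fp4_pflops": 1440.0,   # 1.44 ExaFLOPS expressed in PFLOPS
}


def per_gpu(totals, gpus=GPUS_PER_RACK):
    """Divide each rack total evenly across the GPUs (assumes identical GPUs)."""
    return {name: total / gpus for name, total in totals.items()}


shares = per_gpu(rack_totals)
for name, value in shares.items():
    print(f"{name}: {value:.2f}")
```

Under that assumption each GPU accounts for roughly 187.5 GB of HBM3e, 8 TB/s of memory bandwidth, about 1.8 TB/s of NVLink bandwidth, and 20 PFLOPS of FP4 compute.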

NVIDIA GB300 NVL72

Details:

The NVIDIA GB300 NVL72 is the next evolution of rack-scale AI infrastructure — a liquid-cooled system combining 72 Blackwell Ultra GPUs and 36 NVIDIA Grace CPUs in a single 72-GPU NVLink domain. Built on the Blackwell Ultra architecture, it delivers 1.5x more AI compute FLOPS than Blackwell and is purpose-built for the age of AI reasoning and test-time scaling.

Each Blackwell Ultra GPU features 288 GB of HBM3e memory and new Tensor Core technology with 2x attention-layer acceleration. With up to 40 TB of total fast memory per rack and 800 Gb/s per GPU networking via ConnectX-8 SuperNIC, the GB300 NVL72 is engineered for multi-trillion-parameter models and frontier AI factories.

Highlights

Key Details

- 72 Blackwell Ultra GPUs + 36 Grace CPUs — rack-scale unified compute

- 1,440 PFLOPS FP4 — 1.5x more AI compute FLOPS than Blackwell GB200

- 288 GB HBM3e per GPU — up to 20 TB total HBM, 40 TB fast memory per rack

- 576 TB/s total memory bandwidth

- 130 TB/s NVLink 5 bisection bandwidth across all 72 GPUs

- 800 Gb/s per GPU via ConnectX-8 SuperNIC (Quantum-X800 InfiniBand / Spectrum-X Ethernet)

- 2x attention-layer acceleration vs. Blackwell

- Up to 132 kW power draw, fully liquid-cooled

- Supports FP4, FP6, FP8, BF16, FP16, TF32 precision formats

- Powered by NVIDIA Mission Control for AI factory orchestration
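
The per-rack memory figures follow from the per-GPU capacity. The sketch below reproduces the arithmetic, assuming decimal units (1 TB = 1000 GB); the variable names are illustrative.

```python
GPUS_PER_RACK = 72     # from the list above
HBM_PER_GPU_GB = 288   # from the list above
FAST_MEMORY_TB = 40    # "up to 40 TB" fast memory per rack

# Decimal terabytes are an assumption about how the figures were rounded.
total_hbm_tb = GPUS_PER_RACK * HBM_PER_GPU_GB / 1000
non_hbm_fast_tb = FAST_MEMORY_TB - total_hbm_tb

print(f"Total HBM3e per rack: {total_hbm_tb:.1f} TB")
print(f"Fast memory outside HBM: {non_hbm_fast_tb:.1f} TB")
```

This gives about 20.7 TB of HBM3e, consistent with the "up to 20 TB" figure, and leaves roughly 19.3 TB of the 40 TB fast-memory total outside HBM.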

NVIDIA Vera Rubin NVL72 (Coming Soon)

Details:

The NVIDIA Vera Rubin NVL72 is the next generation of rack-scale AI infrastructure, unifying 72 Rubin GPUs and 36 Vera CPUs in a single NVLink 6 domain.

Built on six co-designed chips, including ConnectX-9 SuperNICs and BlueField-4 DPUs, it treats the data center as the unit of compute and is purpose-built for agentic AI, deep reasoning, and gigascale inference.

Highlights

Key Details

- 72 Rubin GPUs + 36 Vera CPUs — rack-scale unified compute platform

- 3.6 ExaFLOPS NVFP4 inference / 2.5 ExaFLOPS NVFP4 training per rack

- 288 GB HBM4 per GPU — 20.7 TB total HBM4 per rack, 1.6 PB/s bandwidth

- 54 TB LPDDR5x coherent CPU memory per rack

- 260 TB/s NVLink 6 scale-up bandwidth — 2x the GB200's 130 TB/s

- 1.6 Tb/s per GPU networking via ConnectX-9 SuperNIC (Quantum-CX9 InfiniBand / Spectrum-6 Ethernet)

- 336 billion transistors per Rubin GPU — built on TSMC process

- 88-core Vera CPU with Spatial Multi-Threading (176 threads) + 1.8 TB/s NVLink-C2C

- 100% liquid-cooled, cable-free, fanless modular tray — 18x faster servicing vs. Blackwell

- First rack-scale platform with NVIDIA Confidential Computing across CPU, GPU & NVLink domains
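
For a rough sense of the generational step, the sketch below computes ratios between the per-rack figures quoted on this page for the Vera Rubin NVL72 and the GB200 NVL72. It is only indicative: the two FP4 figures may be specified under different conditions (NVFP4 inference versus FP4 Tensor performance), so that ratio in particular should be read as an approximation. The dictionary keys are illustrative names.

```python
# Per-rack figures as quoted on this page.
gb200 = {"nvlink_tbps": 130.0, "fp4_exaflops": 1.44, "mem_bw_tbps": 576.0}
rubin = {"nvlink_tbps": 260.0, "fp4_exaflops": 3.6, "mem_bw_tbps": 1600.0}  # 1.6 PB/s

# Ratio of each Vera Rubin figure to its GB200 counterpart.
ratios = {name: rubin[name] / gb200[name] for name in gb200}

for name, ratio in ratios.items():
    print(f"{name}: {ratio:.2f}x")
```

On these numbers, NVLink bandwidth doubles, quoted FP4 throughput rises about 2.5x, and HBM bandwidth rises about 2.8x.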

We have real compute capacity available in 2026

Global AI Infrastructure

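Global AI's infrastructure is vertically integrated: on-site power generation, direct-to-chip liquid cooling, a layered software stack, and optional air-gapped isolation are designed and operated as one system. Generating power on site also reduces the load these facilities place on the regional grid.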

On-Site Power Generation

Next-generation AI data centers and high-density compute environments require reliable, scalable power delivery beyond what traditional grid infrastructure was designed to support. Global AI integrates on-site power generation as a core component of its vertically integrated infrastructure, currently deploying Bloom Energy fuel cells to provide efficient, modular baseload electricity directly at the facility. This architecture supports GPU-dense AI clusters while reducing dependence on regional grid capacity.

Global AI is also expanding its on-site power generation capabilities and evaluating additional technologies to further strengthen infrastructure resilience and scalability.

- Grid flexibility: reduces reliance on constrained regional grid capacity while maintaining stable power delivery for AI infrastructure.

- Operational resilience: fuel-cell systems provide continuous baseload power for long-duration AI training and inference workloads.

- High-density compute support: designed to power GPU clusters operating at 100+ kW per rack and beyond.

- Energy efficiency: fuel cells avoid traditional combustion, delivering high electrical efficiency within a compact footprint.
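
As a rough illustration of why rack density drives on-site generation sizing, the sketch below estimates the electrical load of a hall of racks. The rack count is a hypothetical input, the per-rack figures are the ones quoted for the GB200 and GB300 systems on this page, and `overhead_factor` is an assumed multiplier for cooling and other facility loads (1.0 means IT load only).

```python
def site_power_mw(racks, kw_per_rack, overhead_factor=1.0):
    """Estimate site electrical load in MW.

    overhead_factor is an assumed multiplier for non-IT loads;
    1.0 counts the racks alone.
    """
    if racks < 0 or kw_per_rack < 0 or overhead_factor < 1.0:
        raise ValueError("inputs must be non-negative and overhead_factor >= 1.0")
    return racks * kw_per_rack * overhead_factor / 1000


# Hypothetical 100-rack deployment at the two quoted rack power levels.
gb200_hall_mw = site_power_mw(100, 120)
gb300_hall_mw = site_power_mw(100, 132)
print(f"100 racks at 120 kW: {gb200_hall_mw:.1f} MW")
print(f"100 racks at 132 kW: {gb300_hall_mw:.1f} MW")
```

Even this modest hypothetical hall needs 12 to 13 MW of continuous supply before any facility overhead is counted.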

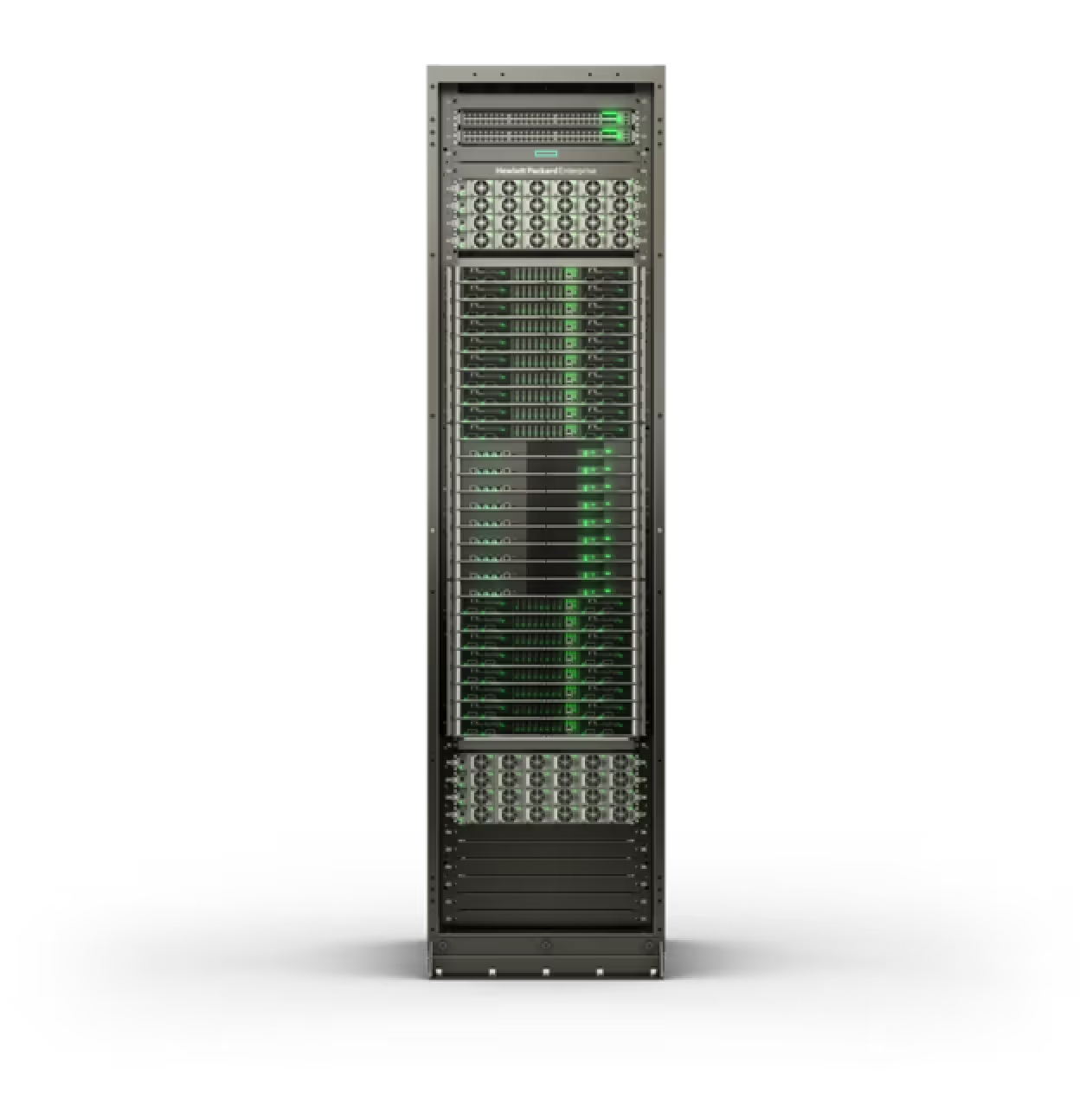

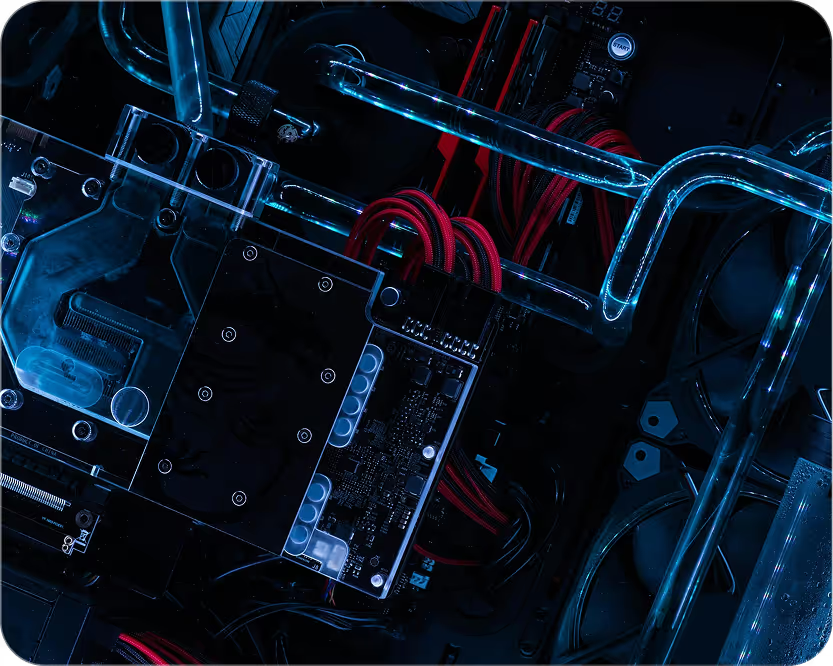

Liquid Cooling Infrastructure

Modern AI compute clusters generate thermal loads that cannot be effectively managed with traditional air-cooled data center designs. Global AI integrates direct-to-chip liquid cooling as a core component of its vertically integrated infrastructure, enabling stable thermal management for GPU-dense AI clusters and next-generation rack-scale systems.

- High-density compute environments — supports rack-scale AI systems operating at 100+ kW per rack and beyond.

- Thermal stability — maintains consistent operating temperatures for long-duration AI training and inference workloads.

- Energy efficiency — significantly reduces the power required for cooling compared with traditional air-cooled data centers.

- Infrastructure scalability — designed to support next-generation GPU platforms and increasing compute density.
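
The scale of the cooling task can be sketched with the standard heat balance Q = m * c_p * dT. The example below estimates the coolant flow needed to carry away one rack's heat, assuming that all rack power ends up as heat in the liquid loop, that the coolant has the properties of plain water, and that it warms by a hypothetical 10 K across the rack. Real loops often use water-glycol mixtures and different temperature rises, so treat the result as an order-of-magnitude figure.

```python
WATER_CP_J_PER_KG_K = 4186.0   # specific heat of water (approximate)
WATER_DENSITY_KG_PER_L = 1.0   # density of water (approximate)


def coolant_flow_lpm(heat_kw, delta_t_k,
                     cp=WATER_CP_J_PER_KG_K,
                     density=WATER_DENSITY_KG_PER_L):
    """Litres per minute of coolant needed to absorb heat_kw with a delta_t_k rise."""
    if heat_kw < 0 or delta_t_k <= 0:
        raise ValueError("heat_kw must be >= 0 and delta_t_k must be > 0")
    mass_flow_kg_s = heat_kw * 1000 / (cp * delta_t_k)  # from Q = m * c_p * dT
    return mass_flow_kg_s / density * 60


# One ~120 kW rack with an assumed 10 K coolant temperature rise.
flow = coolant_flow_lpm(120, 10)
print(f"Required flow: {flow:.0f} L/min")
```

Under these assumptions a single 120 kW rack needs on the order of 170 litres of coolant per minute, and halving the temperature rise doubles the required flow.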

Software Layers

Operating large-scale AI infrastructure requires tightly integrated software systems capable of orchestrating thousands of GPUs and complex AI workloads.

Global AI deploys a layered software architecture designed to support secure, high-performance AI operations across training, inference, and model development environments.

- Cluster orchestration — manages large-scale GPU environments and distributed AI workloads.

- Operational visibility — provides monitoring, performance management, and infrastructure control across compute environments.

- Secure workload management — ensures controlled access to compute resources and AI environments.

- AI development support — enables enterprises and nations to build, train, and deploy advanced AI models within sovereign infrastructure environments.

Air-Gapping

Certain AI workloads require infrastructure environments that are completely isolated from public networks and shared cloud platforms. Global AI environments can be deployed as fully air-gapped infrastructure, physically separated from external internet connectivity and public cloud environments.

This architecture ensures that AI workloads operate within secure, sovereign compute environments.

- Compute sovereignty — full control over infrastructure used to train and run AI models.

- Model protection — prevents external access to proprietary models and training datasets.

- Operational security — eliminates exposure to public internet infrastructure.

- Single-tenant environments — ensures dedicated compute infrastructure with complete physical and operational isolation.